The Anatomy Of An A/B Test

Each A/B test has a form and boundaries, and comes in diverse shapes and sizes that can be designed, if we only choose to do so. Quite often, it is the variations and the ideas being tested that receive the most attention. The anatomy of an experiment, however, is equally important, as it opens the testing process up for debate. The structure of an experiment will manifest itself whether we decide on it consciously or not. Here are a number of characteristics we have begun identifying that should help with the design of experiments and optimization strategies.

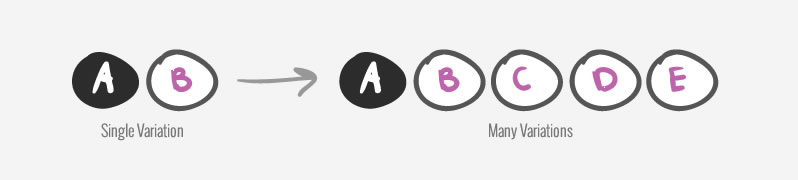

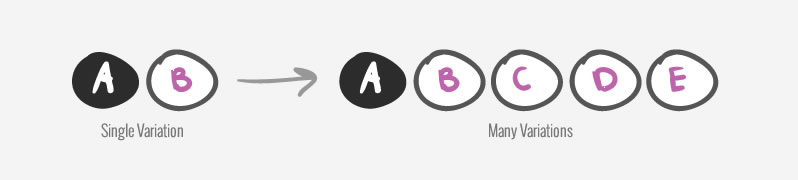

Number Of Variations

The number of variations in your experiment is a variable that you can determine. You can choose to have one or many variations inside each experiment. You can even choose not to have any variations at all and just make changes to the live site. Generally, the more variations you have, the longer the test might take to reach significant results, since the same traffic is split more ways. Hence, tests with fewer variations are generally quicker. More variations in each test tend to increase the chance of finding an effect (be it a false positive or not) and can be used as a way of managing risk.
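
To make the false positive side of this concrete, here is a minimal sketch of how the chance of at least one spurious "winner" grows with the number of variations. It assumes that no variation has a real effect, that each variation is compared against control at the same significance level, and that the comparisons are independent (a simplification, since they share one control). The function names are made up for illustration.

```python
def familywise_false_positive_rate(num_variations: int, alpha: float = 0.05) -> float:
    """Probability that at least one variation looks like a winner by chance.

    Assumes no variation has a real effect and that each comparison against
    control is independent (a simplification, since they share one control).
    """
    return 1.0 - (1.0 - alpha) ** num_variations


def bonferroni_alpha(num_variations: int, alpha: float = 0.05) -> float:
    """Stricter per-comparison threshold that keeps the overall rate near alpha."""
    return alpha / num_variations


for k in (1, 2, 4, 8):
    rate = familywise_false_positive_rate(k)
    print(f"{k} variation(s): {rate:.1%} chance of at least one false positive")
```

A Bonferroni-style correction, as in `bonferroni_alpha`, is one common and conservative way to keep that overall rate in check. The price is a stricter threshold per comparison, which in turn tends to lengthen the test.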

Number Of Changes (Per Variation)

The number of changes is another variable that should be controlled for in an experiment. In each variation we can make a single change or multiple changes all at once. A single-change variation has the benefit of isolating the cause of the effect (assuming one is found). Single changes might often take longer to generate insights (as the effect might be smaller) but are at the same time more reusable. There is, however, also a benefit to grouping multiple changes into a single variation. Assuming that all changes are in fact improvements, together they may often carry a larger effect and therefore need less time to be confirmed. Here lies a classic conflict of interest between science and business. The former wishes to create granular and reusable knowledge, while the latter aims for the largest possible effect in the shortest amount of time.

Change Intensity

The intensity of each change we make for the purpose of a test is a characteristic in itself. As an example, we can design a button with medium, high or ultra-high contrast and then try to measure the associated effects. Or we might test 1, 10, or 100 testimonials as a measure of social proof. Setting intensity is important because occasionally we might be falsely led to believe that there is no effect, when in fact we have simply not made the change strong enough. Some tests will only show an effect for changes above a given threshold. Or even more interestingly, the effect might change in unpredictable ways at certain levels.

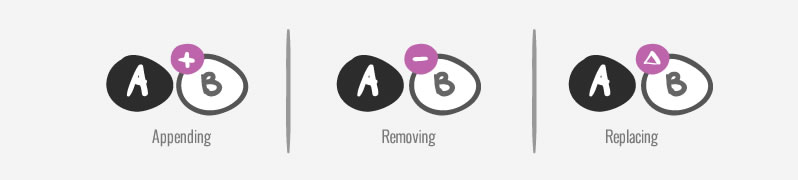

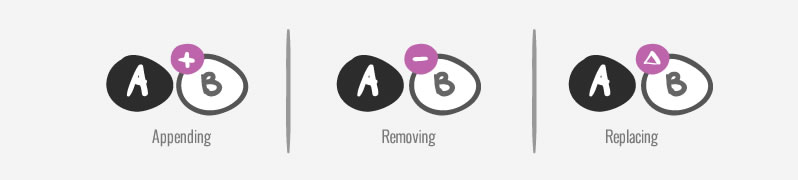

Change Type

The actual change in a given variation can be made in at least three ways. We can append ideas without removing anything on the page. We can remove things from a given variation and measure the absence of something. Or we may replace one thing with another. All are valid change types which we should remain aware of when creating experiments. If we are careless with our changes, we might accidentally lose an element that has been performing quite well, only to see a lowered effect. Such a scenario does not exclude the possibility that a positive effect would still have been achieved had the change been appended to the existing design instead.

Primary Metric Depth

Your metric can be shallow or deep. You can measure shallow goals such as clicks and page visits, or you can measure deeper goals such as adds to cart, sales volume, revenue or even net profit. Typically, the deeper your measurements, the closer they might be to the business goals, but the less data you might have for them. Usually there are many more touch points that represent intent before a sale happens (e.g., more signups or trials than purchases). Hence, trading off by choosing to measure a shallower goal might sometimes be a valid testing approach. Be careful, however, of assuming that shallow clicks will always lead to deeper sales proportionally, which is often not the case.

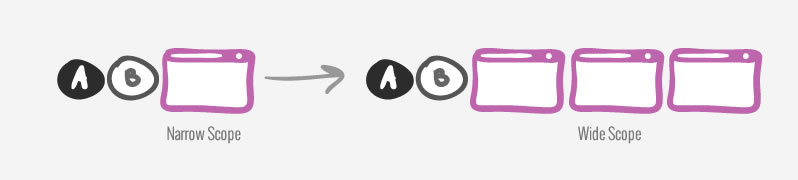

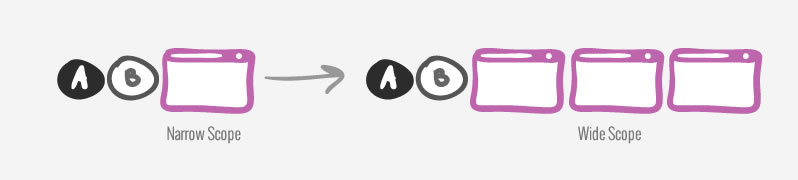

Test Scope

You can test on a single page or on multiple pages all at the same time. At times you might just care to test a single landing page. At other times, your test might stretch across a funnel, or even over a number of related sibling product pages. Usually, the narrower the testing scope, the faster it is possible to detect an effect (as wider funnel tests tend to dilute the conversion baseline with additional traffic). How widely your test spans in terms of scope is an interesting characteristic to control for.

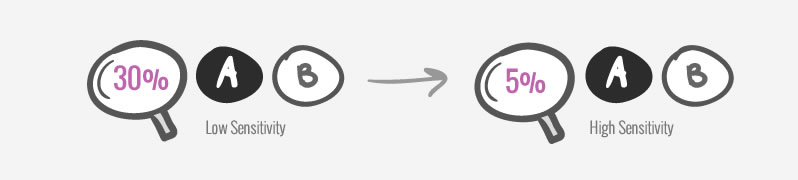

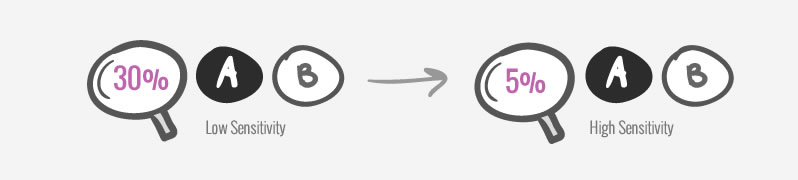

Test Sensitivity

Depending on how an A/B test is estimated and set up (its sample size and baseline conversion rate), it will only be sensitive enough to detect a corresponding minimum effect or higher. As we aim for more sensitive and higher-powered tests, the longer we’ll need to run the test, the fewer variations we’ll be able to have, the higher the baseline conversion rate will need to be, or the more traffic will be needed. Test sensitivity needs to be established before a test is started in order to ensure that the desired minimum effect will be detected, if it exists at all. Comparatively, less sensitive tests will of course require a shorter testing duration, but might fail to detect a smaller effect even if it does exist.
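
As a rough illustration of how these quantities trade off, here is a minimal sketch of a sample size estimate using the common normal approximation for comparing two conversion rates. The function names, the default significance level of 0.05 and power of 0.8, and the even traffic split are assumptions made for this example, not a description of any particular testing tool.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variation(baseline: float, relative_mde: float,
                              alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variation (two-sided test, normal approximation).

    baseline:     control conversion rate, e.g. 0.05 for 5%
    relative_mde: smallest relative lift worth detecting, e.g. 0.2 for +20%
    """
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def estimated_days(per_variation: int, num_variations: int, daily_visitors: int) -> int:
    """Days needed when traffic is split evenly across control plus all variations."""
    return ceil(per_variation * (num_variations + 1) / daily_visitors)


n = sample_size_per_variation(baseline=0.05, relative_mde=0.2)
print(n, "visitors per variation")
print(estimated_days(n, num_variations=1, daily_visitors=1000), "days with 1 variation")
print(estimated_days(n, num_variations=3, daily_visitors=1000), "days with 3 variations")
```

Under these assumptions, halving the minimum detectable effect roughly quadruples the required sample, while a higher baseline or more daily traffic shortens the test.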

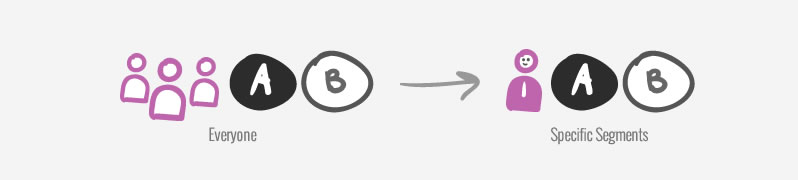

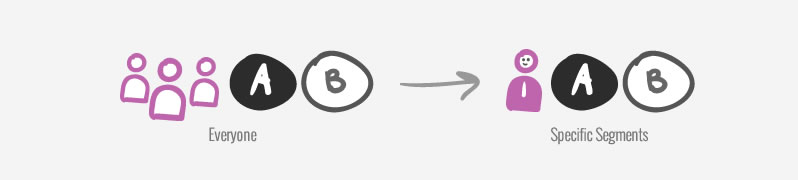

Segment Type

We can test with all traffic or we can test with a specific type of segment. One benefit of segmenting your tests is that a specific message, offer or interaction may be more effective with a targeted audience. Segmentation can also be used to “artificially” adjust or “correct” the conversion baseline. So if you are running a test to increase a signup rate, it might be wise to exclude existing users who have already signed up in the past. This has the added benefit of “raising” the conversion rate baseline, as existing users who obviously will not sign up (again) are excluded. Testing with a higher baseline then carries the benefit of a shorter testing duration.
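
The baseline "correction" is simple arithmetic. Here is a small sketch with made-up numbers: 10,000 visitors, of whom 4,000 are existing users who cannot sign up again, and 300 signups. The figures and the helper name are hypothetical.

```python
def conversion_baseline(conversions: int, visitors: int) -> float:
    """Conversion rate as a fraction of the visitors included in the test."""
    return conversions / visitors


visitors = 10_000        # hypothetical total traffic
existing_users = 4_000   # hypothetical visitors who already signed up in the past
signups = 300            # all signups come from visitors who can still sign up

all_traffic = conversion_baseline(signups, visitors)
eligible_only = conversion_baseline(signups, visitors - existing_users)

print(f"All traffic:    {all_traffic:.1%}")
print(f"Eligible only:  {eligible_only:.1%}")
```

The same signups measured against a smaller, relevant audience give a higher baseline. The segmented test does see fewer visitors per day, though, so the net effect on duration depends on both numbers.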

Design Your Experiments

Becoming aware of the characteristics of the tests you create is quite important. Designing your A/B tests as deliberately as you design the variations themselves will open up the world of testing strategies. Finding the right testing strategy for various situations is, in turn, a stepping stone to finding optimums more effectively.

Jakub Linowski on Aug 06, 2015

Comments

Chris Goward 11 years ago ↑0↓0

This is a very good summary of the types of variables in A/B tests, Jakub. Thanks for sharing!

At WiderFunnel, we also spend a lot of effort on the "Design of Experiments" topic: planning how experiments can best be planned to achieve the best balance between (a) revenue lift and (b) insights.

In terms of your "Number of Changes" per variation, we refer to many changes as "variable cluster variations" and single changes as "isolation variations." Variable cluster variations are generally intended to maximize (a) and isolation variations are aimed at (b).

Within those experiment types, the change type, intensity, scope, and segmentation can be explored.

For more on the topic, you may be interested in an article Alhan Keser wrote recently outlining how Factorial Experiment Design allows for both of these overarching goals: http://www.widerfunnel.com/conversion-rate-optimization/design-your-tests-to-get-consistently-better-results

Feel free to add your thoughts there too!

Cheers,

Chris

Jakub Linowski 11 years ago ↑0↓0

Hey Chris. Thanks for the comment. Super glad there is some similarity in how your team thinks about a/b testing - especially with the struggle between revenue and insights. :)

As for your factorial experiment design, that seems interesting as well. Once people start thinking how one test feeds into the next one, that opens up so much room for creativity.

Good stuff!

Jakub

Sonia Pitt 11 years ago ↑0↓0

This helped me to ideate a new idea, dying to explore it on coming week. Thanks for this rich post. Going to share it with my audience.

Regards

Sonia

Jakub Linowski 11 years ago ↑1↓0

I'd be curious to hear the language that you data focused designers, marketers, UI designers, optimizers, and growth hackers out there use when you design your own experiments. Please share ...
