How We Prioritize Testing Ideas For Highest Winning Certainty Using Google Sheets

At the beginning of a project, we usually generate anywhere from 5 to 40 testing ideas, inspired by a combination of sources and individuals. Once we have this list, we want to be able to see which ideas have the highest certainty of winning, the largest potential effect, or the lowest effort. Watch this short video screencast to see how we prioritize testing ideas in Google Sheets instead of simply working on the first thing that comes to mind.

The Columns We Typically Use

- IDEAS: a short, 2-3 word title for the idea

- PRIMARY METRIC: the metric to increase (Sales, Signups, Revenue, etc.)

- DESCRIPTION: a more detailed explanation of the change (or variations) so that everyone understands it

- SCOPE: the starting page(s) for the test (including any segments, if applicable)

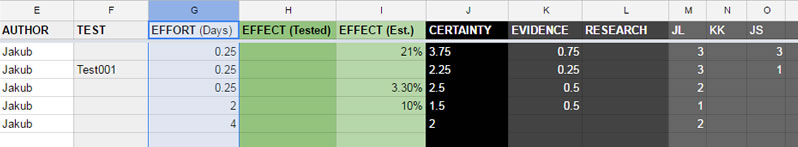

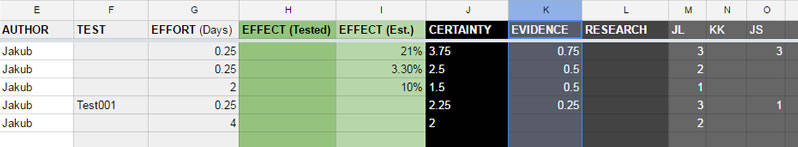

- AUTHOR: the person who can explain the idea further if needed

- TEST: a test code (sometimes linked) referring to a real test that was run

- EFFORT: an estimate of how many days are needed to build the test

- EFFECT (Tested): the measured effect from a real test

- EFFECT (Estimated): a predicted effect before running a test (based on past test data)

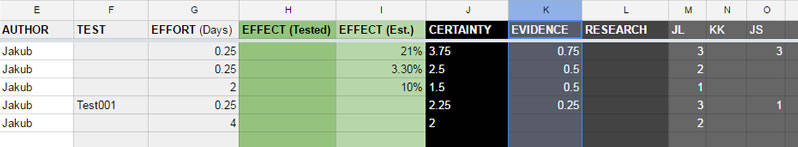

- CERTAINTY: the auto-calculated total certainty that the test will lead to a win (higher = better)

- EVIDENCE: evidence-based certainty measured from past tests

- RESEARCH: research-based certainty (extra points for qualitative or customer research)

- SUBJECTIVE CERTAINTY: each team member expresses their certainty on a scale from -3 to 3, and the scores are then averaged
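The columns above map naturally onto a record type. The sketch below is a minimal Python model of one sheet row. The `Idea` class, its field names, and especially the CERTAINTY formula (EVIDENCE + RESEARCH + averaged SUBJECTIVE CERTAINTY) are our illustrative assumptions, not the template's actual spreadsheet formula.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Optional


@dataclass
class Idea:
    """One row of the prioritization sheet (field names are illustrative)."""
    title: str                                 # IDEAS: short 2-3 word title
    primary_metric: str                        # PRIMARY METRIC, e.g. "Signups"
    description: str = ""                      # DESCRIPTION
    scope: str = ""                            # SCOPE: starting page(s), segments
    author: str = ""                           # AUTHOR
    test_code: Optional[str] = None            # TEST: code of a real test, if run
    effort_days: float = 0.0                   # EFFORT: estimated build days
    effect_tested: Optional[float] = None      # EFFECT (Tested), 0.04 = +4%
    effect_estimated: Optional[float] = None   # EFFECT (Estimated)
    evidence: float = 0.0                      # EVIDENCE: from past tests
    research: float = 0.0                      # RESEARCH: extra points for research
    # SUBJECTIVE CERTAINTY: one score per person, each between -3 and 3
    subjective: dict[str, float] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject subjective scores outside the -3..3 scale
        for person, score in self.subjective.items():
            if not -3 <= score <= 3:
                raise ValueError(f"{person}'s score {score} is outside -3..3")

    @property
    def subjective_avg(self) -> float:
        """Average of the individual scores (0 if nobody has scored yet)."""
        return mean(list(self.subjective.values())) if self.subjective else 0.0

    @property
    def certainty(self) -> float:
        """ASSUMED total: evidence + research + averaged subjective certainty."""
        return self.evidence + self.research + self.subjective_avg
```

For example, `Idea("Shorter form", "Signups", evidence=2, research=1, subjective={"Ann": 3, "Ben": 1}).certainty` evaluates to 5 under this assumed formula.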

5 Useful Ways To Sort This Google Sheet

As an optimization project progresses, we may apply different testing strategies at different points in time. Sorting the sheet in different ways gives us different views of the data, which we then use to decide what to focus on next. Here are five views we find useful.

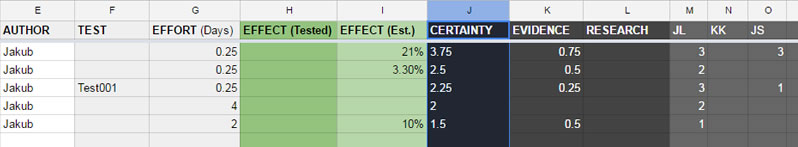

Highest Total Certainty Ideas First

Seeing which ideas are most likely to win is our most important view. For us, certainty is a prediction of whether or not a test will lead to a win. It is a net count obtained by adding evidence-based certainty (from past a/b tests that led to wins) to subjective certainty (from multiple individuals, capped at a maximum of 3).

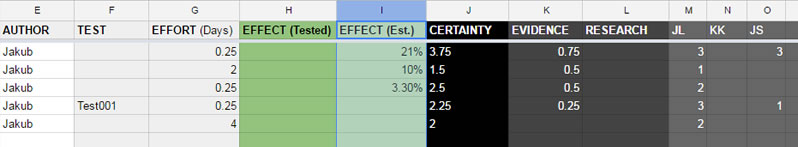

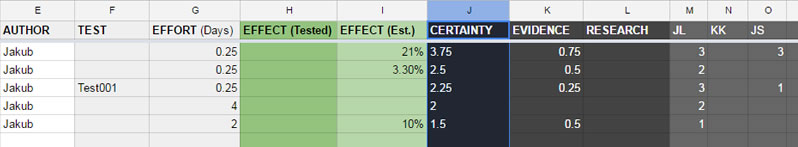
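A minimal Python sketch of this view, assuming each idea carries an evidence-based certainty and a list of individual subjective scores. The cap of 3 is applied to the averaged subjective certainty as described above; clamping at -3 on the low end is our assumption. Idea titles and numbers are made up for illustration.

```python
from statistics import mean


def total_certainty(evidence: float, subjective_scores: list[float]) -> float:
    """Evidence-based certainty plus averaged subjective certainty (capped at 3)."""
    subjective = mean(subjective_scores) if subjective_scores else 0.0
    subjective = max(-3.0, min(3.0, subjective))  # safeguard: keep within -3..3
    return evidence + subjective


# Hypothetical ideas: (title, evidence-based certainty, individual scores)
ideas = [
    ("Social proof", 3, [2, 1, 3]),
    ("Shorter form", 1, [3, 3]),
    ("New hero image", 0, [-1, 2]),
]

# Highest total certainty first
ranked = sorted(ideas, key=lambda i: total_certainty(i[1], i[2]), reverse=True)
for title, evidence, scores in ranked:
    print(f"{title}: {total_certainty(evidence, scores):.2f}")
```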

Highest Predicted Effect First

When we rely on past a/b tests to predict the outcome of future tests, we also look at the median effects of similar tests. When we have such data at hand, we include it in our sheet. Sorting by this column gives us a view of which tests might bring the highest gains. (Sometimes we glance at the certainty column to remind ourselves how many tests a prediction is based on.)

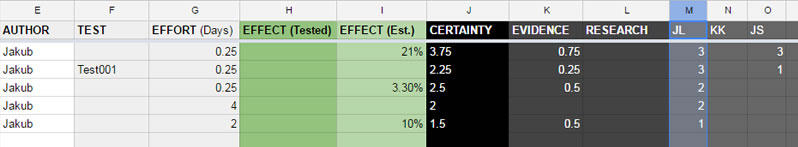

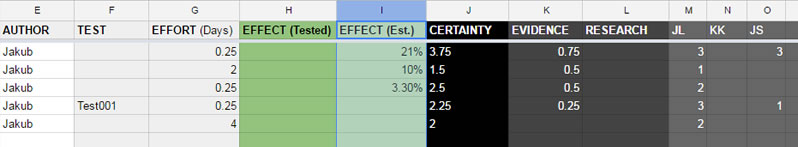
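A sketch of this view, assuming the estimated effect is stored as a fraction (0.05 for +5%) and left blank when no similar tests exist. Putting blank estimates last and breaking ties by certainty are our own illustrative choices; the rows are hypothetical.

```python
from typing import Optional

# Hypothetical rows: (title, estimated effect as a fraction, total certainty)
ideas: list[tuple[str, Optional[float], float]] = [
    ("Shorter form", 0.05, 4.0),
    ("Social proof", 0.02, 5.0),
    ("New hero image", None, 0.5),   # no past test data, so no estimate
    ("Free shipping bar", 0.05, 2.0),
]


def effect_sort_key(row: tuple[str, Optional[float], float]) -> tuple[bool, float, float]:
    """Blank estimates go last; equal effects are ordered by higher certainty."""
    _, effect, certainty = row
    return (effect is None, -(effect or 0.0), -certainty)


for title, effect, certainty in sorted(ideas, key=effect_sort_key):
    shown = "n/a" if effect is None else f"{effect:+.0%}"
    print(f"{title:18} effect {shown:>5}  certainty {certainty}")
```

Keeping certainty next to the effect mirrors the habit described above of checking how many tests a prediction rests on.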

An Individual's Highest Certainty Ideas

Some people on the team may have more experience than others, so we might sort the list by one person's subjective certainty (reminder: it ranges from -3 to 3). For example, we might sort by the ideas a client considers best. Some project leaders may have deeper background knowledge, or they might have already tried something similar in the past. Keeping individual guesses separate and looking for large discrepancies between them also makes for a great conversation starter (why does one person believe an idea is terrible while another thinks the same idea is great?).

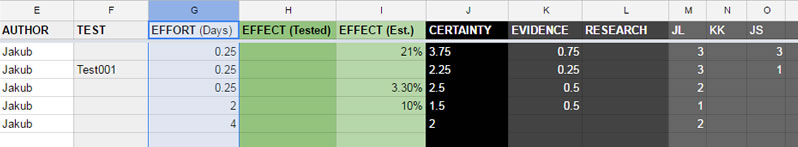
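The two uses described above, ranking by one person's score and spotting disagreements, can be sketched in a few lines of Python. The people, scores, the treatment of a missing score as neutral 0, and the `min_spread` threshold of 4 are all hypothetical choices for illustration.

```python
# Hypothetical per-person subjective scores (each between -3 and 3)
scores = {
    "Shorter form":   {"client": 3, "lead": 2, "designer": 2},
    "Social proof":   {"client": -2, "lead": 3, "designer": 1},
    "New hero image": {"client": 1, "lead": -1, "designer": 0},
}


def by_person(person: str) -> list[str]:
    """Idea titles sorted by one person's score, highest first (missing = 0)."""
    return sorted(scores, key=lambda t: scores[t].get(person, 0), reverse=True)


def discrepancies(min_spread: int = 4) -> list[tuple[str, int]]:
    """Ideas whose highest and lowest scores differ by at least min_spread."""
    spreads = {t: max(s.values()) - min(s.values()) for t, s in scores.items()}
    return sorted(
        ((t, d) for t, d in spreads.items() if d >= min_spread),
        key=lambda pair: pair[1],
        reverse=True,
    )


print(by_person("client"))
print(discrepancies())
```

Here "Social proof" would be flagged, since the client and the lead disagree by 5 points.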

Lowest Effort First

High-effort test ideas can stall an optimization project; this has happened to us a few times already. For this reason, it's good to see which ideas are simpler and faster to execute. Focusing on easier testing ideas matters most at the beginning of a project, when we are building momentum and trust.

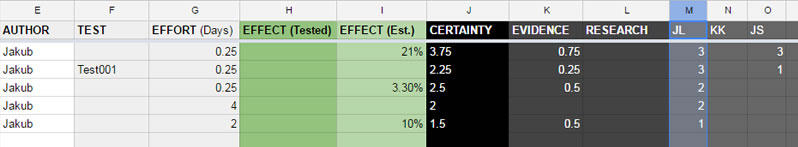
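A sketch of the lowest-effort view, assuming EFFORT is stored in days. Breaking ties between equal-effort ideas by higher total certainty is our own suggestion, not part of the original sheet; the rows are hypothetical.

```python
# Hypothetical rows: (title, effort in days, total certainty)
ideas = [
    ("Checkout redesign", 10, 5.0),
    ("Button copy", 0.5, 1.0),
    ("Shorter form", 2, 4.0),
    ("Trust badges", 0.5, 3.0),
]

# Lowest effort first; among equal-effort ideas, higher certainty first
quick_wins = sorted(ideas, key=lambda row: (row[1], -row[2]))
for title, days, certainty in quick_wins:
    print(f"{title}: {days} day(s), certainty {certainty}")
```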

Highest Evidence-Based Certainty Ideas First

Although we trust our team's subjective guesses, sometimes it's useful to focus only on testing ideas that have real a/b test data to back them up. In that case we sort by the EVIDENCE column, which contains evidence-based certainty (derived from the number and strength of similar tests that also won).
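A minimal sketch of this view in Python. Dropping ideas with no evidence (an EVIDENCE score of 0 or less) before sorting is our assumption about what "only those ideas with real a/b test data" means in practice; the rows are hypothetical.

```python
# Hypothetical rows: (title, EVIDENCE score, averaged subjective score)
ideas = [
    ("Shorter form", 2, 3.0),
    ("New hero image", 0, 2.5),   # no a/b test data behind it
    ("Social proof", 4, 1.0),
]

# Keep only ideas backed by past tests, then rank by evidence alone,
# ignoring the subjective scores entirely.
evidence_backed = sorted(
    (row for row in ideas if row[1] > 0),
    key=lambda row: row[1],
    reverse=True,
)
print([title for title, _, _ in evidence_backed])
```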

Best Sources Of Evidence-Based Certainty For You To Use

This approach to prioritization relies on data from existing a/b tests: the more such tests we have, the higher our certainty that wins will follow. So where can you find such tests?

- You can gather certainty data from your own tests and calculate it yourself

- You can look through a/b tests that people have kindly shared publicly

- You can speed things up by using our conversion patterns, which have already done the searching, finding, comparing and analysis for you. We look at many tests and provide certainty counts that you can copy and paste into your prioritization projects.
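If you calculate evidence-based certainty from your own tests, one simple possibility is a net count over similar past tests. The sketch below uses a hypothetical scoring rule (+1 per win, -1 per loss, 0 for flat or inconclusive results). It is not the exact method behind our conversion patterns, and it ignores the strength of each result, which the EVIDENCE column described above also takes into account.

```python
from typing import Literal

Outcome = Literal["win", "loss", "flat"]

# Hypothetical scoring rule: each similar past test adds or subtracts one point
POINTS: dict[str, int] = {"win": 1, "loss": -1, "flat": 0}


def evidence_certainty(outcomes: list[Outcome]) -> int:
    """Net count of similar past tests: wins minus losses, flat tests ignored."""
    return sum(POINTS[o] for o in outcomes)


# Outcomes of past tests similar to a "shorter form" idea (made-up data)
past: list[Outcome] = ["win", "win", "flat", "loss", "win"]
print(evidence_certainty(past))  # value to enter in the EVIDENCE column
```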

Jakub Linowski on Nov 20, 2016
