Overcoming Fears Of Your First Experiment

Those who run A/B tests sometimes forget how scary it was to get started with their first online experiment. Such fears, big or small, can irrationally discourage us from discovering more effective design ideas. I’m going to identify some of these fears and suggest ways to overcome them, as I think that anyone is better off testing than not. The experimental world of seeking effects (both positive and negative) is a very exciting and rewarding one.

The Fear Of Uncertainty

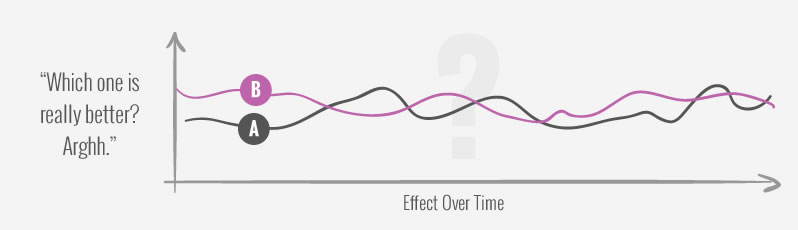

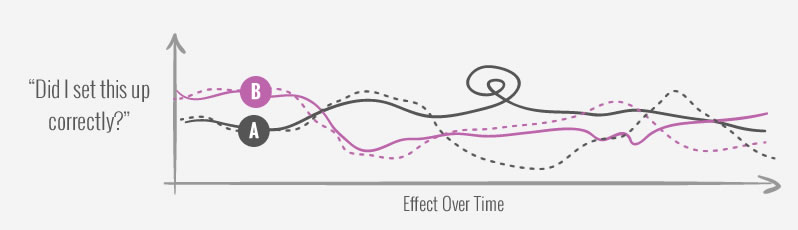

Human beings like certainty, and by not running any tests you may feel as if your most recent version is your best one - even if that is only an illusion. The moment your experiment is launched, you are automatically placed into a state of not knowing which of your variations is better. To make things worse, the early days or weeks of an experiment can be especially uncertain, as they are ruled by chance. Experiments typically start off with illusory winners and losers chaotically trading places before the true effects begin to stabilize. Such uncertainty may be uncomfortable for some. Don't let this stop you, because:

- Experiments Generate Certainty

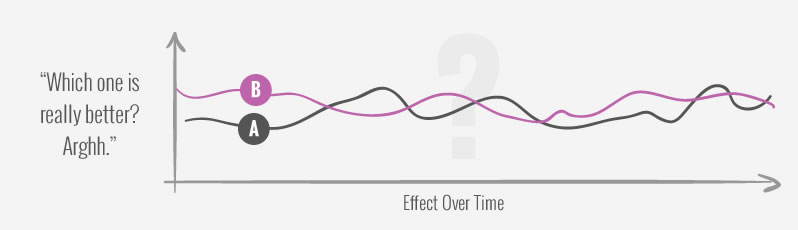

As more and more data is collected, experiments actually generate certainty (or remove uncertainty). Experiments may start off with lots of uncertainty, but over the course of time their effects become more and more defined as chance gives way to a truer and truer effect. Uncertainty is a temporary state which gradually passes, so keep that experiment running.

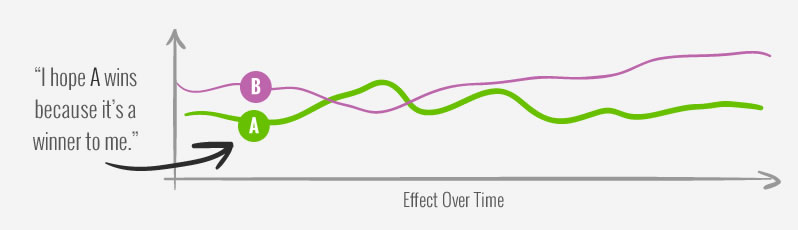

The Fear Of Having To Implement An Undesired Win

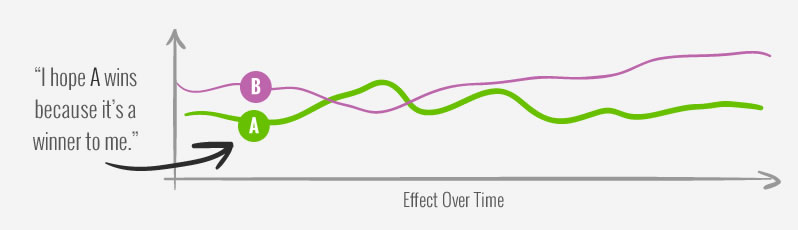

Sometimes we already believe that one version is better for some specific business reason or by some specific measure, and we are asked to run an experiment in which we don’t want certain variations to win. In these situations, running the experiment may be uncomfortable because it can feel as if we will have to implement an undesired outcome. Don't let this stop you, because:

- You Don’t Have To Implement A Winner

Test results and implementation decisions are always separate. In the simplest terms, strong test results on meaningful business metrics should be strongly considered for implementation. But in the end, you or your team decide whether to implement a given variation or not. You are in control. A single test provides a single piece of evidence, and your own past knowledge should be factored into the implementation decision. If you believe that a successful test can hurt some other metric, you should always raise that as an issue, whether before or after the test.

- You Can Always Iterate A Test

The outcome of an experiment is not final. You can always design new experiments to follow up, challenge or retest winners if you think you have better ideas - and you should. Chances are there is a better variation around the corner.

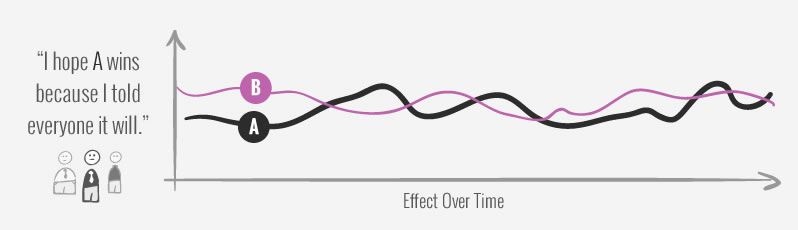

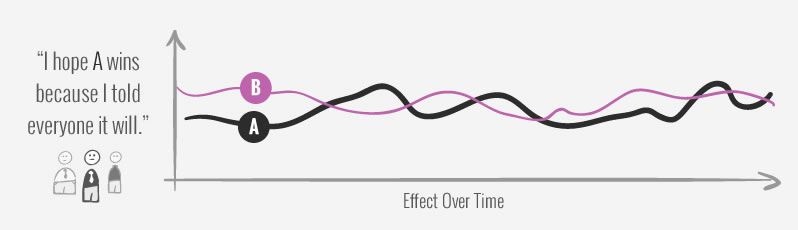

The Fear Of Being Wrong

We all make predictions, some of which we announce to our peers. In such cases an experiment might feel as if we are the ones being tested. What if the variation we said would win does not win? Being wrong can feel frightening. Don’t let this stop you, because:

- It’s Less About You And More About The Net Outcome

It’s completely normal to encounter effects in your tests ranging from strong losers to strong winners. When you start experimenting, you will test ideas that you think are great only to find that they are not - that’s part of the process. As you continue testing, however, winners will also emerge, and that’s what people will focus on. The net outcome of your optimization program will deliver value, with winning variations kept and losing ones discarded.

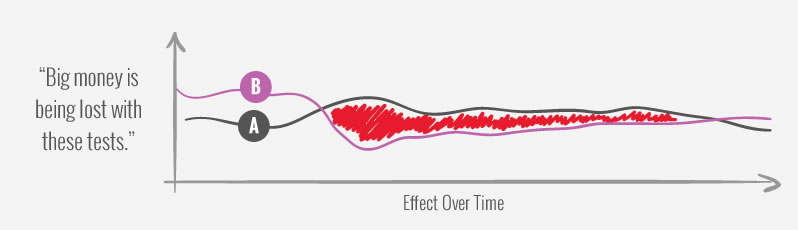

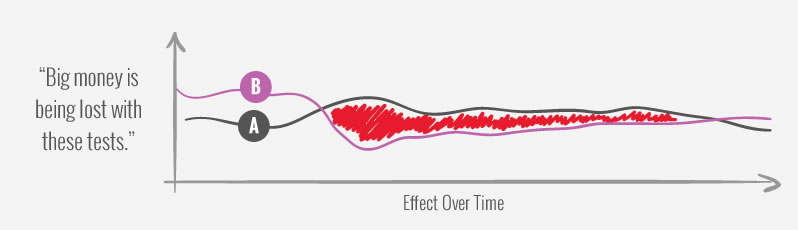

The Fear Of Losing Profits

If you want to run experiments that impact the bottom line, you will need to run tests that affect business metrics such as sales and revenue. This can feel frightening when combined with the possibility of negative effects. We have seen quite a few cases where a client with access to a test link asked to stop a test because it showed a 10% or 20% drop in sales. Don’t let this stop you, because:

- Early Effects Are Inflated And Regress

If you see huge drops in sales at the beginning of an experiment, chances are it’s just a wide confidence interval. Most experiments show exaggerated effects while the numbers of visitors and conversions are low. Typically those gains or losses of plus or minus 100% are artifacts of chance and shrink toward the true effect over time (they regress). So give them time.
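To make the point about wide early intervals concrete, here is a rough sketch. It assumes a simple normal-approximation interval, and the visitor and conversion counts are made up for illustration; the function name is hypothetical and this is not how any particular testing tool computes its numbers.

```python
import math

def relative_effect_interval(conv_a, n_a, conv_b, n_b, z=1.96):
    """Approximate 95% interval for B's lift relative to A.

    Uses a plain normal approximation for the difference of two
    conversion rates and divides by A's observed rate. This is a
    rough illustration, not a rigorous method.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    diff = p_b - p_a
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    return (diff - z * se) / p_a, (diff + z * se) / p_a

# Same observed rates (5% vs 4%), very different amounts of data.
early = relative_effect_interval(10, 200, 8, 200)
late = relative_effect_interval(1000, 20000, 800, 20000)

print(f"early: {early[0]:+.0%} to {early[1]:+.0%}")  # roughly -101% to +61%
print(f"late:  {late[0]:+.0%} to {late[1]:+.0%}")    # roughly -28% to -12%
```

With only a handful of conversions, the interval spans everything from a total loss to a large gain, so an alarming early number says very little. With a hundred times more data, the same observed rates give a much narrower range.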

- You Can Set Up Stop Rules

You can predefine a set of stopping rules for losing variations that you feel comfortable with. This takes the emotion out of the equation. We typically begin to worry about losses once we have at least 100 conversions and a loss larger than 10% that persists.
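A stop rule like this can be written down before the test starts. The sketch below is one possible reading of it, not a standard: the `Snapshot` type and `should_stop` function are hypothetical, the 100 conversions are counted on the variation, and "persists" is interpreted as the loss holding for three consecutive checks, which is an assumption of this example.

```python
from dataclasses import dataclass

@dataclass
class Snapshot:
    """Cumulative totals at one check-in (for example, once a day)."""
    control_visitors: int
    control_conversions: int
    variant_visitors: int
    variant_conversions: int

def relative_effect(s):
    """Variant's conversion rate relative to control (-0.2 means 20% worse)."""
    if s.control_visitors == 0 or s.variant_visitors == 0 or s.control_conversions == 0:
        return 0.0  # not enough data to measure anything
    control_rate = s.control_conversions / s.control_visitors
    variant_rate = s.variant_conversions / s.variant_visitors
    return (variant_rate - control_rate) / control_rate

def should_stop(snapshots, min_conversions=100, loss_threshold=-0.10, sustained_checks=3):
    """True only if the last few checks all show a large enough loss.

    sustained_checks=3 is an assumed interpretation of a loss that persists.
    """
    recent = snapshots[-sustained_checks:]
    if len(recent) < sustained_checks:
        return False
    return all(
        s.variant_conversions >= min_conversions
        and relative_effect(s) < loss_threshold
        for s in recent
    )

# Made-up data: an early scare, a sustained loss, and a loss that recovers.
early_scare = [Snapshot(200, 10, 200, 6)]
sustained = [
    Snapshot(3000, 150, 3000, 120),
    Snapshot(3500, 175, 3500, 140),
    Snapshot(4000, 200, 4000, 160),
]
recovering = sustained[:2] + [Snapshot(4000, 200, 4000, 195)]

print(should_stop(early_scare), should_stop(sustained), should_stop(recovering))
```

Agreeing on the thresholds up front means nobody has to argue about a scary dashboard in the first week; the rule either fires or it does not.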

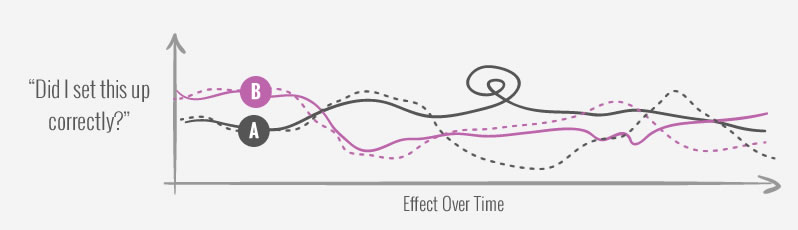

The Fear Of Setting Up The Experiment

When setting up an experiment for the first time, the technical aspects may overwhelm some people. Will the data be measured accurately? Is it difficult to get started? Won’t the experiment break something else? Don’t let this stop you, because:

- It’s Easy To Run Simple Tests

Many popular A/B testing tools (e.g. Visual Website Optimizer) make basic experimental setup easy enough to get started. They take care of randomly assigning variations, tracking metrics and even suggesting when you might have enough data. As a starting point, these tools are good enough.
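To demystify the random assignment part, here is a minimal sketch of one common approach: hashing a visitor ID so that each visitor always sees the same variation. This is an illustration only; the function name and ID formats are hypothetical, and it is not a description of how Visual Website Optimizer or any other specific tool works internally.

```python
import hashlib

def assign_variation(visitor_id, experiment_id, variations=("control", "variant")):
    """Pick a variation for a visitor, consistently across visits.

    Hashing the experiment and visitor IDs together spreads visitors
    roughly evenly across variations and keeps assignments stable.
    """
    key = f"{experiment_id}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variations)
    return variations[bucket]

# The same visitor gets the same answer every time.
first_visit = assign_variation("visitor-123", "homepage-headline")
second_visit = assign_variation("visitor-123", "homepage-headline")

# Across many visitors the split comes out close to even.
counts = {"control": 0, "variant": 0}
for i in range(10000):
    counts[assign_variation(f"visitor-{i}", "homepage-headline")] += 1
print(first_visit == second_visit, counts)
```

In practice you rarely need to write this yourself, which is the point: the tools handle it for you.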

- The More Experiments You Run The Better You’ll Get

As with anything else, the more of it you do, the better you’ll get, just through experience. We also created a small guide for running more accurate tests - it's completely free.

Know Of Other Fears? Share Them Right Here

Please add any other fears which you think might exist around running A/B tests.

Jakub Linowski on Dec 19, 2016

Comments

Lisan 7 years ago

Dear Jakub,

I bumped into your article about overcoming fears of your first experiment. I liked reading it a lot!

At Angistudio we put a lot of time in experimenting and helping others to overcome this fear. I think I might have an interesting link which can be of added value for your blog post! Lately, we developed a tool to help designers or other beginners in the UX field to get started with experimenting. The tool can be found here: https://www.angistudio.com/tools/lean-experiment-test-card/.

Do you think this could be of added value for your blog post? Would you be interested in linking to our tool?

Kind regards,

Lisan Ganzevles
